
5/16/2023

Stockfish chess vs magnus

We also recognised some of the PCA tournaments, and a Kramnik match, before FIDE reunified in 2007. Likewise, the World Championship crown was briefly split between two competing organisations, and we had to consider how to treat that split.įollowing most modern commentators, we decided the first historic match which was worthy of the World Championship title was played in 1886. FIDE has only existed since 1924, but there are historic matches unofficially treated as World Championships. The first step was deciding what counted as World Championship matches, then collecting them all, and compiling them. We decided to run all World Championship games through Stockfish 14 NNUE, to try and rank their accuracy, and see if our initial claim was correct. With that background out of the way, on to our process. The average centipawn loss (ACPL) measures this centipawn loss across an entire game - so the lower the ACPL a player has, the more perfectly they played, in the eyes of the engine assessing it. It doesn’t necessarily mean they actually physically lost a pawn - a loss of space or a worse position could be the equivalent of giving up a pawn physically. For example, if a move lost 100 centipawns, that’s the equivalent of a player losing a pawn. To help evaluate the accuracy of positions and gameplay, engines present their evaluation in centipawns (1/100th of a pawn).

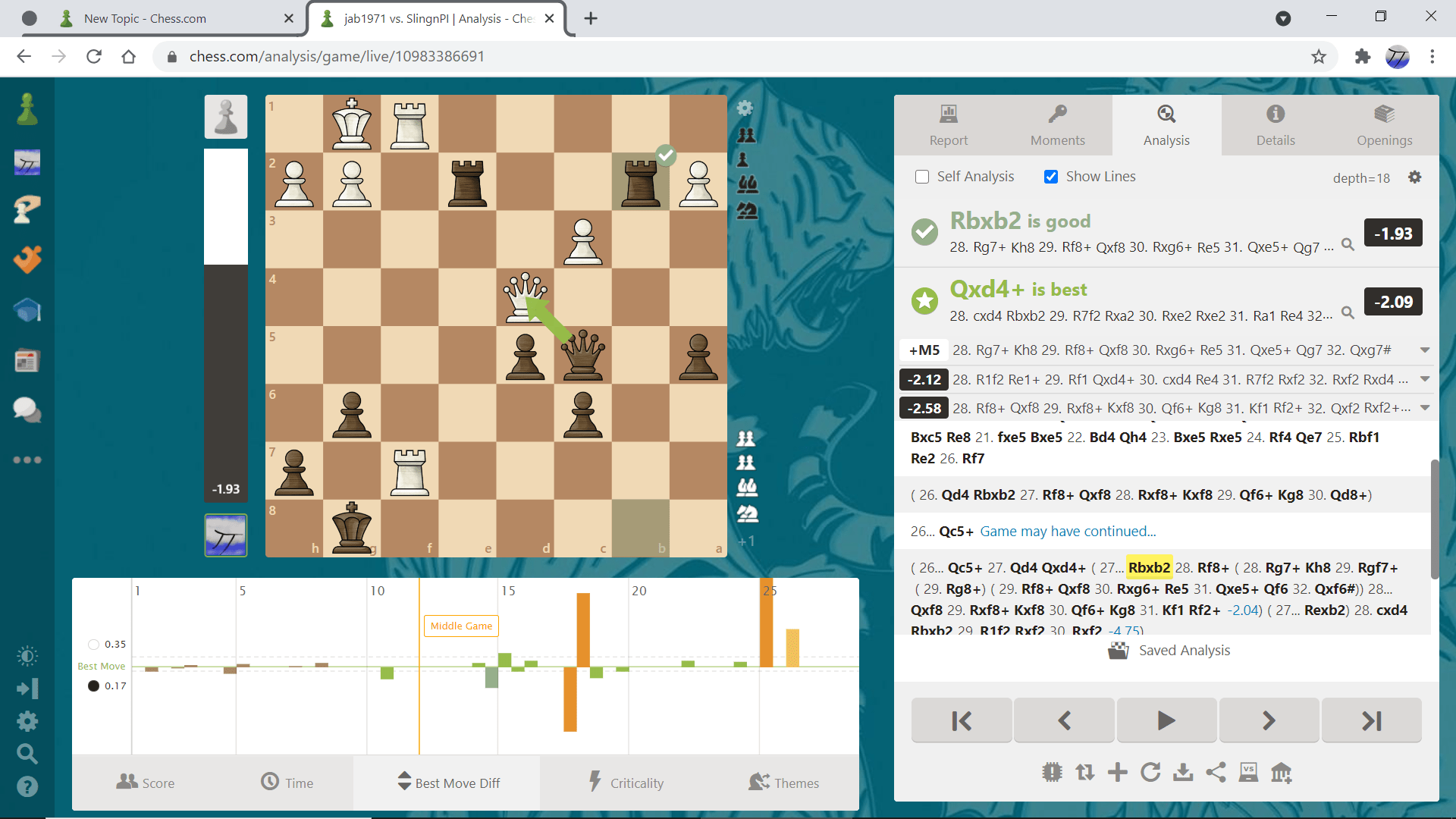

Lichess's evaluation of the Round 3 game using Stockfish 14 NNUE The latest version of this fruitful cooperation is known as Stockfish 14 NNUE, which is the chess engine Lichess uses for all post-game analysis when a user requests it, and the chess engine we used to measure the accuracy of all World Championship games. Consequently, the Neural Network method of assessing and evaluating positions that was initially used in Shogi engines was eventually implemented in Stockfish, later also in collaboration with another popular and powerful chess engine inspired by AlphaZero, called Lc0 (or Leela chess Zero). Stockfish 8 (the number referring to the version of the software) was completely annihilated by AlphaZero, in what remains to be a somewhat controversial matchup.īut even if Stockfish really had been fighting with one arm behind its back, it was still clearly outclassed. Stockfish’s community had made it one of the strongest chess engines in the world, able to trivially beat the strongest chess players in the world running on a mobile phone, when it was pitted against Google DeepMind’s AlphaZero in 2017. Stockfish is the name of one such chess engine - which just so happens to be free, open source software (just like Lichess). Chess software (now known as “chess engines”) continued to improve, and became capable of assessing and evaluating the best lines of chess to be played. Since then, the software has improved significantly, with Deep Blue created by IBM famously defeating GM Garry Kasparov in 1997 - a landmark moment in popular culture where it seemed machines had finally overtaken humans. Note the many cupboards filled with hardware wired up to it The Ferranti Mk 1 computer, the model Turochamp ran on. Too complex for computers of the day to run, it played its first game in 1952 (and lost in under 30 moves against an amateur). The father of modern computing, Alan Turing, was the first recorded to have tried, creating a programme called Turochamp in 1948. 
So, let’s discuss computer analysis, chess engines, and ACPL briefly.Īlmost since computers have existed, programmers have tried creating software which can play chess. Some may be feeling confused by what computer analysis is, or what ACPL really means. A Brief History of Chess Engines and ACPLīut first, let’s take a step back. So, our man on the ground in Dubai felt comfortable asking “how do both players feel, having played what appears to be one of the most accurate FIDE World Championship games played in history, as assessed by engines?” At the time, the team had only manually checked through the 2010s - but after getting the answers from the players, we decided to fact-check ourselves and investigate the question more deeply. For various reasons, some of our team know the lifetime average centipawn loss (ACPL) of some top players throughout history, and of previous FIDE World Championship matches. So we immediately knew that the accuracy as determined by computer analysis did indeed seem to be really quite low, even by super-GM standards: 2 ACPL for Magnus Carlsen, and 3 ACPL for Ian Nepomniachtchi. excitable (particularly with what it thinks are blunders), but we decided to check it out. The broadcast chat can occasionally get a bit. After round 3 of the FIDE World Championship 2021 came to a draw between GM Magnus Carlsen and GM Ian Nepomniachtchi, the Lichess broadcast chat immediately pounced upon the incredible accuracy the two players displayed.
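To make the ACPL idea described in this post concrete, here is a minimal Python sketch of how an average centipawn loss could be computed from a list of engine evaluations. It is an illustration under stated assumptions, not Lichess's actual implementation: the function name `average_centipawn_loss`, the input format (one evaluation per position, in centipawns from White's point of view) and the `cap` clamp on extreme evaluations are all hypothetical choices made for this example.

```python
from typing import List, Tuple


def average_centipawn_loss(evals_cp: List[int], cap: int = 1000) -> Tuple[float, float]:
    """Return (white_acpl, black_acpl) for one game.

    evals_cp[0] is the engine evaluation of the starting position and
    evals_cp[i] the evaluation after ply i, all in centipawns from
    White's point of view. `cap` is an assumed clamp so that one huge
    swing (for example a forced mate) does not dominate the average.
    """
    def clamp(value: int) -> int:
        return max(-cap, min(cap, value))

    white_losses: List[int] = []
    black_losses: List[int] = []
    for ply in range(1, len(evals_cp)):
        before = clamp(evals_cp[ply - 1])
        after = clamp(evals_cp[ply])
        change = after - before  # positive means the position got better for White
        if ply % 2 == 1:
            # White made this move: a drop in the evaluation is White's loss.
            white_losses.append(max(0, -change))
        else:
            # Black made this move: a rise in the evaluation is Black's loss.
            black_losses.append(max(0, change))

    def mean(values: List[int]) -> float:
        return sum(values) / len(values) if values else 0.0

    return mean(white_losses), mean(black_losses)


# Hypothetical evaluations: White's second move drops the evaluation by 100 centipawns.
white_acpl, black_acpl = average_centipawn_loss([20, 20, 20, -80, -80])
print(white_acpl, black_acpl)
```

In this made-up example White loses 0 and 100 centipawns on two moves, giving an ACPL of 50, while Black loses nothing. Improvements in the evaluation are counted as zero loss rather than negative loss, which is one common convention but again an assumption here.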
